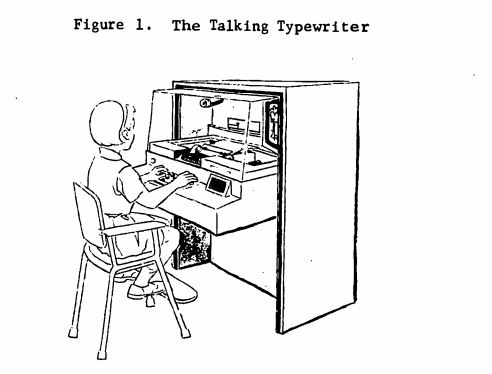

Followers of my social media might be aware that I’m presently working on a project concerning the history of educational technology and its consequences for the perceived cultural and discursive connection between disability and the mechanical. Part of this work centers on a series of experiments carried out using the Edison Responsive Environment (ERE), perhaps better known as the “Talking Typewriter.” While working on this project, I’ve discovered a few curiosities that likely won’t make it into any paper that emerges from my research but that nevertheless captivate me enough to write about. So I’m going to post some brief notes on these tangents, because I suspect I’m not the only one who might find them interesting. These are sketches, perhaps ramblings, but certainly not fully developed thoughts.

One of these tangents is the process by which the ERE was programmed. While the hardware aspects of the ERE are well documented (see Lockett, 2019, for a contemporary treatment), the specifics of developing ERE software deserve attention, in part because they offer a window into how we set about building machines for personal computing before standardized means of doing so emerged.

I’m particularly interested in an article* by J.R.W. Hill entitled “The Preparation of Programs for the Edison Responsive Environment,” which, fortunately for our purposes, is exactly what it claims to be. That Hill wrote and published about the very act of programming signals two things: first, that the ERE was an unusual enough device to warrant it even in an era in which programmable computers were widely familiar; and second, that programming could have been an unfamiliar enough practice to Hill’s audience (educators, sociologists, prospective lessees or buyers of an ERE, and other stakeholders) that it merited special consideration in its own right. Hill’s paper is simultaneously instructional and descriptive; think of it as the K&R C for the bespoke language the ERE speaks.

One thing that appears unique about the ERE is the medium on which programs were recorded. “The basis for all E.R.E. programs,” notes Hill, “is a series of codes which are recorded on the back of a program card on magnetic tracks” (Hill, 1970, p. 288). A magnetic medium is familiar enough to most people who have worked with present-day computing technology, but recording on a flat sheet seems, at least to our sensibilities, somewhat unusual. Of the technologies developed out of the ERE, this magnetic-sheet technology would go on to be adopted in the “talking page,” a less complex, non-interactive piece of reading-instruction technology. The YouTuber Techmoan has covered the technical aspects of a similar product from 3M; the details differ, but the general principle of recording voice and other data on a flat magnetic sheet is the same.

The machine language of the ERE consists of character literals sent to the typewriter, together with control sequences for the typewriter and other peripherals, giving us the following vocabulary:

- PH: Pointer hold

- PA: Pointer advance

- KV: Key Voice (takes on/off as a parameter)

- PR: Toggle slide (takes on/off)

- CV: Play recorded audio (takes 1/2 as a parameter to select audio program)

- LC: Set lowercase

- Any character reproduces that character on the typewriter paper
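To make that vocabulary a little more concrete, here is a toy interpreter, written in Python, that walks through a program and records what each code would do. It’s a hypothetical sketch of my own and not a reconstruction of how the ERE actually read its cards: Hill describes codes on magnetic tracks, whereas this assumes whitespace-separated text tokens, ON/OFF and 1/2 as parameter spellings, and that LC lowercases every character typed after it. The names `ToyERE`, `run`, `PARAM_OPCODES`, and `BARE_OPCODES` are invented for illustration. PH, PA, and CV drive physical mechanisms, so the sketch only logs them.

```python
from dataclasses import dataclass, field

# Hypothetical toy model of the ERE control vocabulary listed above.
# The real encoding on the card's magnetic tracks is NOT modeled here;
# programs are assumed to be whitespace-separated text tokens.
PARAM_OPCODES = {
    "KV": {"ON", "OFF"},  # key voice on/off
    "PR": {"ON", "OFF"},  # slide on/off
    "CV": {"1", "2"},     # select recorded audio program 1 or 2
}
BARE_OPCODES = {"PH", "PA", "LC"}  # pointer hold, pointer advance, lowercase


@dataclass
class ToyERE:
    key_voice: bool = False
    slide_on: bool = False
    lowercase: bool = False
    paper: list = field(default_factory=list)  # characters typed so far
    log: list = field(default_factory=list)    # every action, in order

    def run(self, program: str) -> None:
        tokens = program.split()
        i = 0
        while i < len(tokens):
            tok = tokens[i]
            if tok in PARAM_OPCODES:
                allowed = PARAM_OPCODES[tok]
                if i + 1 >= len(tokens) or tokens[i + 1] not in allowed:
                    raise ValueError(f"{tok} expects one of {sorted(allowed)}")
                self._control(tok, tokens[i + 1])
                i += 2
            elif tok in BARE_OPCODES:
                self._control(tok, None)
                i += 1
            elif len(tok) == 1:
                # Any other single character is typed onto the paper.
                # Assumption: LC lowercases every character typed after it.
                ch = tok.lower() if self.lowercase else tok
                self.paper.append(ch)
                self.log.append(f"type {ch}")
                i += 1
            else:
                raise ValueError(f"unknown token {tok!r}")

    def _control(self, op, arg):
        if op == "KV":
            self.key_voice = arg == "ON"
        elif op == "PR":
            self.slide_on = arg == "ON"
        elif op == "LC":
            self.lowercase = True
        # PH, PA, and CV drive physical mechanisms (the pointer, the audio
        # playback), so this sketch only records that they were issued.
        self.log.append(op if arg is None else f"{op} {arg}")
```

For instance, `ToyERE().run("KV ON PR ON C A T LC D O G CV 1")` turns key voice and the slide on, leaves `C`, `A`, `T`, `d`, `o`, `g` on the simulated paper, and ends its log with `CV 1`. Keeping the vocabulary in two small tables mirrors the sparseness of the language itself: nearly every token is either a two-letter code or a character to type.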

In some ways, this sparse language isn’t too far removed from the machine languages of then-extant general-purpose computers, nor is it very different from those of the early microcomputers. What I find interesting here is that, unlike when I try to get my head around writing machine code for, say, the Commodore 64, the relations between opcodes and elements of the machine are visible in real space on the ERE. If, for example, I send the instruction PR FOR to the machine, it clearly and tangibly advances a slide projector that I can watch in operation. There’s a tangibility here that is absent from programming a conventional computer, and acknowledging it gestures toward a dual responsiveness in the ERE: by its design it creates a responsive environment for the user, and it creates one for the programmer, because a limited vocabulary acts directly on objects that can be observed without additional equipment. Hill also considers the skill set of the effective ERE programmer to extend beyond the merely technical preparation of programs:

It is relatively easy to master the operations involved. In addition, however, there is room for the exercise of considerable artistry, not only in the preparation of illustrations for slides, but in the way in which voice messages are recorded. It is obvious that any messages of instruction which are to be played back, to the learner must be delivered with clarity; what is not so obvious is that the rate of delivery and style of speaking may also be critical factors in gaining the pupil’s interest and cooperation (Hill, 1970, p. 291).

This recognition of the artistry of ed tech development is notable in part as a prefiguration of commonplace advice for teachers who use multimedia, but also as a statement of (Hill’s understanding of) the role of a programmer as a creative worker whose work has, and is accountable to, a broader social good.

Part of what draws me toward the ERE is that it represents an early use of computing as a social good, as something separate from commerce, warfare, and the kind of science that underpins those categories. The separation isn’t as neat as I’d perhaps like – no small portion of the funding for experiments using the ERE came from the US government’s Head Start program – but what I’m finding as I read the words of those involved in this work is that, at least in their writing, they appear to believe, to varying degrees, in the stated aim of creating an autotelic responsive environment for the purposes of understanding how children learn and how to teach them more effectively. I confess to a bit of utopian daydreaming here. I’m already staking out a bold claim in the main project, that the work around the ERE formed an oppositional strategy to the hegemonic behaviorist approach to teaching children (particularly disabled, and especially autistic, children), so I might leave this train of thought about the role of the programmer to ripen on the vine for a while. Nevertheless, I hope these notes are generative, at least for me!